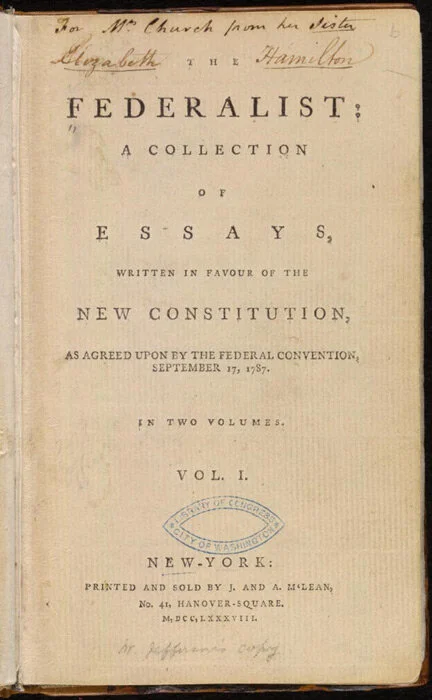

The Federalist Papers #1: Alexander Hamilton's Plea for Reasoned Debate

For most of the time I have been blogging, I have been blogging every other Sunday on political philosophy. At a paragraph or a few paragraphs per blog post, so far I have blogged my way through John Stuart Mill’s On Liberty and John Locke’s Second Treatise on Government. Here are the two aggregator posts leading to the relevant links:

Today is my first post on The Federalist Papers, which is where I am turning next.

#1 in The Federalist Papers is a plea by Alexander Hamilton (using his pen-name Publius) for reasoned debate. In our own time of heightened political passions, it has a great deal of wisdom for us. Alexander Hamilton proffers the following ideas. (I draw the text below from this website.) Before each passage (indented) I put in bold my brief summary of that passage and in italics a representative excerpt from the passage.

A. Great good for the world (and honor for those deciding) will accompany a good decision: “… whether societies of men are really capable or not of establishing good government from reflection and choice …”

To the People of the State of New York:

AFTER an unequivocal experience of the inefficiency of the subsisting federal government, you are called upon to deliberate on a new Constitution for the United States of America. The subject speaks its own importance; comprehending in its consequences nothing less than the existence of the UNION, the safety and welfare of the parts of which it is composed, the fate of an empire in many respects the most interesting in the world. It has been frequently remarked that it seems to have been reserved to the people of this country, by their conduct and example, to decide the important question, whether societies of men are really capable or not of establishing good government from reflection and choice, or whether they are forever destined to depend for their political constitutions on accident and force. If there be any truth in the remark, the crisis at which we are arrived may with propriety be regarded as the era in which that decision is to be made; and a wrong election of the part we shall act may, in this view, deserve to be considered as the general misfortune of mankind.

This idea will add the inducements of philanthropy to those of patriotism, to heighten the solicitude which all considerate and good men must feel for the event….

B. Collectively making a reasoned choice is difficult: The plan offered to our deliberations affects too many particular interests … not to involve … views, passions and prejudices little favorable to the discovery of truth.

… Happy will it be if our choice should be directed by a judicious estimate of our true interests, unperplexed and unbiased by considerations not connected with the public good. But this is a thing more ardently to be wished than seriously to be expected. The plan offered to our deliberations affects too many particular interests, innovates upon too many local institutions, not to involve in its discussion a variety of objects foreign to its merits, and of views, passions and prejudices little favorable to the discovery of truth.

C. Power-hungry men whose power is furthered by a weak federal government will oppose the proposed Constitution: “the perverted ambition … of men, who … flatter themselves with fairer prospects of elevation from the subdivision of the empire”

Among the most formidable of the obstacles which the new Constitution will have to encounter may readily be distinguished the obvious interest of a certain class of men in every State to resist all changes which may hazard a diminution of the power, emolument, and consequence of the offices they hold under the State establishments; and the perverted ambition of another class of men, who will either hope to aggrandize themselves by the confusions of their country, or will flatter themselves with fairer prospects of elevation from the subdivision of the empire into several partial confederacies than from its union under one government.

D. However, many people will hold the wrong opinion honestly, and others will hold the correct opinion for impure motives: “… much of the opposition … will spring from … the honest errors of minds led astray by preconceived jealousies and fears”

It is not, however, my design to dwell upon observations of this nature. I am well aware that it would be disingenuous to resolve indiscriminately the opposition of any set of men (merely because their situations might subject them to suspicion) into interested or ambitious views. Candor will oblige us to admit that even such men may be actuated by upright intentions; and it cannot be doubted that much of the opposition which has made its appearance, or may hereafter make its appearance, will spring from sources, blameless at least, if not respectable--the honest errors of minds led astray by preconceived jealousies and fears. So numerous indeed and so powerful are the causes which serve to give a false bias to the judgment, that we, upon many occasions, see wise and good men on the wrong as well as on the right side of questions of the first magnitude to society. This circumstance, if duly attended to, would furnish a lesson of moderation to those who are ever so much persuaded of their being in the right in any controversy. And a further reason for caution, in this respect, might be drawn from the reflection that we are not always sure that those who advocate the truth are influenced by purer principles than their antagonists. Ambition, avarice, personal animosity, party opposition, and many other motives not more laudable than these, are apt to operate as well upon those who support as those who oppose the right side of a question. Were there not even these inducements to moderation, nothing could be more ill-judged than that intolerant spirit which has, at all times, characterized political parties. For in politics, as in religion, it is equally absurd to aim at making proselytes by fire and sword. Heresies in either can rarely be cured by persecution.

E. Generous attitudes toward partisan opponents are unlikely to prevail: A torrent of angry and malignant passions will be let loose…. to increase the number of their converts by the loudness of their declamations and the bitterness of their invectives.

And yet, however just these sentiments will be allowed to be, we have already sufficient indications that it will happen in this as in all former cases of great national discussion. A torrent of angry and malignant passions will be let loose. To judge from the conduct of the opposite parties, we shall be led to conclude that they will mutually hope to evince the justness of their opinions, and to increase the number of their converts by the loudness of their declamations and the bitterness of their invectives. An enlightened zeal for the energy and efficiency of government will be stigmatized as the offspring of a temper fond of despotic power and hostile to the principles of liberty. An over-scrupulous jealousy of danger to the rights of the people, which is more commonly the fault of the head than of the heart, will be represented as mere pretense and artifice, the stale bait for popularity at the expense of the public good. It will be forgotten, on the one hand, that jealousy is the usual concomitant of love, and that the noble enthusiasm of liberty is apt to be infected with a spirit of narrow and illiberal distrust….

F. Populism is a greater danger to liberty than a strong central government: “… a dangerous ambition more often lurks behind the specious mask of zeal for the rights of the people than under the forbidden appearance of zeal for the firmness and efficiency of government.”

On the other hand, it will be equally forgotten that the vigor of government is essential to the security of liberty; that, in the contemplation of a sound and well-informed judgment, their interest can never be separated; and that a dangerous ambition more often lurks behind the specious mask of zeal for the rights of the people than under the forbidden appearance of zeal for the firmness and efficiency of government. History will teach us that the former has been found a much more certain road to the introduction of despotism than the latter, and that of those men who have overturned the liberties of republics, the greatest number have begun their career by paying an obsequious court to the people; commencing demagogues, and ending tyrants.

G. I am, indeed, in favor of the proposed Constitution; let me spell out my arguments: “I frankly acknowledge to you my convictions, and I will freely lay before you the reasons on which they are founded.”

In the course of the preceding observations, I have had an eye, my fellow-citizens, to putting you upon your guard against all attempts, from whatever quarter, to influence your decision in a matter of the utmost moment to your welfare, by any impressions other than those which may result from the evidence of truth. You will, no doubt, at the same time, have collected from the general scope of them, that they proceed from a source not unfriendly to the new Constitution. Yes, my countrymen, I own to you that, after having given it an attentive consideration, I am clearly of opinion it is your interest to adopt it. I am convinced that this is the safest course for your liberty, your dignity, and your happiness. I affect not reserves which I do not feel. I will not amuse you with an appearance of deliberation when I have decided. I frankly acknowledge to you my convictions, and I will freely lay before you the reasons on which they are founded. The consciousness of good intentions disdains ambiguity. I shall not, however, multiply professions on this head. My motives must remain in the depository of my own breast. My arguments will be open to all, and may be judged of by all. They shall at least be offered in a spirit which will not disgrace the cause of truth.

I propose, in a series of papers, to discuss the following interesting particulars [bullet formatting added; all-caps in original]:

THE UTILITY OF THE UNION TO YOUR POLITICAL PROSPERITY

THE INSUFFICIENCY OF THE PRESENT CONFEDERATION TO PRESERVE THAT UNION

THE NECESSITY OF A GOVERNMENT AT LEAST EQUALLY ENERGETIC WITH THE ONE PROPOSED, TO THE ATTAINMENT OF THIS OBJECT

THE CONFORMITY OF THE PROPOSED CONSTITUTION TO THE TRUE PRINCIPLES OF REPUBLICAN GOVERNMENT

ITS ANALOGY TO YOUR OWN STATE CONSTITUTION and lastly,

THE ADDITIONAL SECURITY WHICH ITS ADOPTION WILL AFFORD TO THE PRESERVATION OF THAT SPECIES OF GOVERNMENT, TO LIBERTY, AND TO PROPERTY.

H. Although few argue directly against the Union of the thirteen states, those against the proposed Constitution must downplay the importance of striving to preserve the Union: “we already hear it whispered in the private circles of those who oppose the new Constitution, that the thirteen States are of too great extent for any general system, and that we must of necessity resort to separate confederacies of distinct portions of the whole.”

In the progress of this discussion I shall endeavor to give a satisfactory answer to all the objections which shall have made their appearance, that may seem to have any claim to your attention.

It may perhaps be thought superfluous to offer arguments to prove the utility of the UNION, a point, no doubt, deeply engraved on the hearts of the great body of the people in every State, and one, which it may be imagined, has no adversaries. But the fact is, that we already hear it whispered in the private circles of those who oppose the new Constitution, that the thirteen States are of too great extent for any general system, and that we must of necessity resort to separate confederacies of distinct portions of the whole. [1] This doctrine will, in all probability, be gradually propagated, till it has votaries enough to countenance an open avowal of it. For nothing can be more evident, to those who are able to take an enlarged view of the subject, than the alternative of an adoption of the new Constitution or a dismemberment of the Union. It will therefore be of use to begin by examining the advantages of that Union, the certain evils, and the probable dangers, to which every State will be exposed from its dissolution. This shall accordingly constitute the subject of my next address.

PUBLIUS.

1. The same idea, tracing the arguments to their consequences, is held out in several of the late publications against the new Constitution.

Conclusion

It is easy to notice that, under the guise of a general introduction, table of contents and exhortation to give a fair hearing, Alexander Hamilton has already inserted many substantive arguments for the Constitution, as well as characterizations of opponents to the Constitution that are less kind than his characterizations of the advocates of the Constitution. Nevertheless, his call to reasonableness and extensive lip service to the idea that his opponents are not all entirely evil is refreshing.

That is not to say that The Federalist Papers #1 has no villains: the final paragraph about division of the Union into pieces being “whispered in private circles” presents the clear picture of an evil conspiracy.

To my mind, the restraint Alexander Hamilton has in attacking his opponents makes those attacks more powerful to those who actually read them than the more brazen political attacks of our day. But in our day, brazenness of political attacks is crucial for getting an attack to spread virally; and it is considered OK if those virally-spread attacks are only heard by those who already lean against those attacked: solidifying one’s base is worth a lot, politically.

One might claim that the expansion of the franchise to all citizens 18 and up means that political appeals need to be dumbed down in our day. That may be. But a wonderful thing about the high intellectual register of the political appeals in The Federalist Papers is that they can still speak to us today. I doubt that the political diatribes of the early 21st century will stand the test of time anywhere near as well.

Update November 10, 2019: Greg Ransom had these comments on Twitter.

All Hallows' Eve

Link to the poem shown above (which includes quite a bit in a Scottish dialect).

As a child, I remember watching the Peanuts Christmas special, in which Linus talks about the religious meaning of Christmas. What about the religious meaning of Halloween? Despite Linus’s insistence on the importance of the Great Pumpkin, the Wikipedia article “Halloween” says that liturgically,

It begins the three-day observance of Allhallowtide, the time in the liturgical year dedicated to remembering the dead, including saints (hallows), martyrs, and all the faithful departed.

From the tenor of many Halloween costumes, it seems that Halloween is a time to remember not only the dead but also death itself. But, intriguingly, Halloween comes at death in a light-hearted way—as if to say: yes, we’ll all die, but what of it?

Should we fear death? As an if-then statement, those convinced they will go to hell should fear death. Those convinced they will go to heaven shouldn’t fear death much.

What about those convinced that death is nonexistence? In his essay “Immortality and the Fear of Death” Jack Sherefkin discusses the ancient symmetry argument that one shouldn’t fear death if it is simple nonexistence:

A more powerful argument used by the Epicureans against the fear of death is the “symmetry” argument. This was probably first used by Lucretius, a Roman disciple of Epicurus. Lucretius argued since we do not feel horror at our past non-existence, the time before we were born, it is irrational to feel horror at our future non-existence, the time after our death, since they are the same. Or as Seneca expressed it: “Would you not think him an utter fool who wept because he was not alive a thousand years ago? And is he not just as much a fool who weeps because he will not be alive a thousand years from now? It is all the same; you will not be and you were not. Neither of these periods of time belongs to you.”

Some version of the symmetry argument has been put forth by Cicero, Plutarch, Seneca, Schopenhauer and Hume. Hume cited Lucretius’ argument to Boswell, Dr. Johnson’s biographer, when he interviewed Hume on his death bed. “I asked him if the thought of Annihilation never gave him any uneasiness. He said not the least; no more than the thought that he had not been, as Lucretius observes.”

If not fear, I think there is a reason to hate death. Death is a key part of the time budget constraint we face. Facing death is like not being richer in time than we are. Among other things, I am annoyed with my own death in the way I am annoyed with having only 24 hours in a day, of which almost a third go to sleep.

We may fear or hate our own death, but some of the sharpest experiences in our lives are likely to be the deaths of others. Most people see the deaths of their own parents—an experience that typically hits them like a ton of bricks. You can sense some of that in my posts about the deaths of my parents:

I have also seen the deaths of my father-in-law and mother-in-law. And, unfortunately, I have seen the deaths of three of our children, which my wife Gail talks about in a guest post:

In the public realm, I have become very much aware of eminent economists important to my own thinking who have died. I have not written posts about all of the economists important to me who have died in the last few years; for example, I was very distressed when Julio Rotemberg died. But I do have some posts on economists important to me who have died:

But Halloween takes even our grief and looks at the bright side. The Mexican counterpart of Halloween is “The Day of the Dead” when the dead come back to visit. But even if the dead are simply remembered, it is a demonstration that death has not sundered our connection utterly. The connection between two human beings is badly, badly frayed when one of them dies. But the connection is not gone. It is still there.

The connection to those who have gone before us is an important one. On the Norlin Library here at the University of Colorado Boulder is the inscription “Who knows only his own generation remains always a child,” which is a tweak on something Cicero wrote. We grow stronger by strengthening our connection to those who are dead.

Let me leave you with my “Daily Devotional for the Not-Yet,” which has a line about our ancestors and others who have died before us. Let us not forget them.

In this moment, as in all the moments I have, may the image of the God or Gods Who May Be burn brightly in my heart.

Let faith give me a felt assurance that what must be done to bring the Day of Awakening and the Day of Fulfilment closer can be done in a spirit of joy and contentment.

Let the gathering powers of heaven be at my left hand and my right. Let there be many heroes and saints to blaze the trail in front of me. Let the younger generations who will follow discern the truth and wield it to strengthen good and weaken evil. Let the grandeur of the Universe above inspire noble thoughts that lead to noble plans and noble deeds. Let the Earth beneath be a remembrance of the wisdom of our ancestors and of others who have died before us. And may the light within be an ocean of conscious and unconscious being to sustain me and those who are with me through all the trials we must go through.

In this moment, I am. And I am grateful that I am. May others be, now and for all time.

An Economist's Halloween

Above is the 2018 Marginal Revolution University Economist’s Halloween video. Below is the 2019 video:

Another Problem with Processed Food: Propionate

In “The Problem with Processed Food” I point out 3 problems with highly processed food:

Most food processing makes food easier to eat and enhances digestibility [which can increase the speed with which it boosts insulin].

The newer the type of food processing, the less tested it is by time.

Food companies have a different objective function than you do—or at least a different objective function than your long-run self does.

Under the category of things “untested by time” is propionate. The article shown above reports on recent research:

Consumption of propionate, an ingredient that’s widely used in baked goods, animal feeds, and artificial flavorings, appears to increase levels of several hormones that are associated with risk of obesity and diabetes …

The study, which combined data from a randomized placebo-controlled trial in humans and mouse studies, indicated that propionate can trigger a cascade of metabolic events that leads to insulin resistance and hyperinsulinemia — a condition marked by excessive levels of insulin. The findings also showed that in mice, chronic exposure to propionate resulted in weight gain and insulin resistance.

Additional details strengthen the story—except for the limitation of the human trials to short-run effects on only 14 people. However, that was enough to show that the mouse results seem relevant to humans:

For this study, the researchers focused on propionate, a naturally occurring short-chain fatty acid that helps prevent mold from forming on foods. They first administered it to mice and found that it rapidly activated the sympathetic nervous system, which led to a surge in hormones, including glucagon, norepinephrine, and a newly discovered gluconeogenic hormone called fatty acid-binding protein 4 (FABP4). This in turn led the mice to produce more glucose from their liver cells, leading to hyperglycemia — a defining trait of diabetes. Moreover, the researchers found that chronic treatment of mice with a dose of propionate equivalent to the amount typically consumed by humans led to significant weight gain in the mice, as well as insulin resistance.

To determine how the findings in mice may translate to humans, the researchers established a double-blinded, placebo-controlled study that included 14 healthy participants. The participants were randomized into two groups: One group received a meal that contained one gram of propionate as an additive and the other was given a meal that contained a placebo. Blood samples were collected before the meal, within 15 minutes of eating, and every 30 minutes thereafter for four hours.

The researchers found that people who consumed the meal containing propionate had significant increases in norepinephrine as well as increases in glucagon and FABP4 soon after eating.

Although I have recommended that the first thing you should do to make your diet healthier is to go off sugar (see for example “3 Achievable Resolutions for Weight Loss” and “Letting Go of Sugar”) I have been careful to point out that as things stand it is hard to distinguish between going off sugar and going off highly processed food, because almost all highly processed food has sugar as an important ingredient. There really could be reasons other than sugar that make processed food bad for you, but if you go off sugar, you will avoid those problems too, because you will be going off most processed food as well.

One claim I have made is that an insulin spike will make someone hungry again. Note that for the producers of processed food, that would typically seem like a plus: there is a reasonable chance that the person eating their food would decide that they were hungry for that particular food, or that, being hungry, it was again most convenient to eat that food. So even if the producer of that processed food were only looking at the sales numbers, they might gravitate toward producing types of processed food that caused insulin spikes. Thus, even without any conscious bad intent, the process of engineering food that people will eat a lot of has some tendency to result in bad outcomes. And that engineering of food happens much, much faster than any human evolution that could blunt bad effects.

Michael Pollan famously said “Eat food, not too much, mostly plants.” This post on Michael Pollan’s statement gives several criteria for something being real food instead of just a food-like substance. If it is real food:

Your great grandmother would recognize it as food.

It doesn’t come out of a container with a long list of ingredients.

It will rot or go bad, and so tends to be on the outer perimeter of grocery stores where it is easier to bring in fresh food of that type.

The two biggest limitations of Michael Pollan’s dictum are that (a) not all plant food is created equal in terms of its health effects, and (b) eating all the time, from morning till night, is problematic. See “Forget Calorie Counting; It's the Insulin Index, Stupid,” “Stop Counting Calories; It's the Clock that Counts” and “Jason Fung's Single Best Weight Loss Tip: Don't Eat All the Time.”

For annotated links to other posts on diet and health, see:

Job Posting for a Full-Time Research Assistant with a Bachelor's Degree to Help with the Research Needed to Build a National Well-Being Index, Starting Late Summer 2020

Our team—Dan Benjamin, Ori Heffetz, Kristen Cooper and I, plus the continuing research assistants Hannah Solheim and Arshia Hashemi—are looking for someone interested in being a research assistant for a couple of years (most likely before going on to a PhD program in Economics) to help us hammer out the principles needed to construct a national well-being index as carefully as GDP is constructed, so that it can stand as a full coequal to GDP. Here is the link telling how to apply:

https://docs.wixstatic.com/ugd/2f9665_84b13338500942af9c85e5e44c5bc699.pdf

I am also involved in work on genoeconomics, for which we also need a full-time research assistant at the same stage:

We advertised both positions on Twitter:

Tushar Kundu was our research assistant 2017–2019. Here is what he has to say:

Hannah Solheim is not on Twitter, but Arshia Hashemi is, and has this to say about his experience so far as a research assistant:

If you are on Twitter yourself, you can ask me questions there: https://twitter.com/mileskimball But the official postings at the links above should make clear what to do next.

Dan, Ori and I, with various coauthors, have published 6 papers in the American Economic Review (3 full-size papers and 3 “Papers and Proceedings” papers) and two in other journals based on our research in the Economics of Happiness, as you can see from my CV.

Update, October 30, 2019: Mark Fabian gives this vote of confidence:

The Virtual Reality Theory of Dualism

Link to the Wikipedia article “Mind-body dualism”

In “On Being a Copy of Someone's Mind” I argued as follows:

… my problem with hardcore dualism is this:

If a spirit or soul influences any of my decisions, then it has enough effect on particles in the brain that it should be detectable by physics with the sensitivity of instruments we have now.

If a spirit or soul is affected by the body but does not itself have any effect on the body (Epiphenomenalism), then it is not through any causality from that spirit or soul that we talk about the spirit or soul, because it has no causal pathway to move our mouths. God might make our bodies so they talk about our epiphenomenal spirits or souls. But our spirits or souls in this case are not talking about themselves on their own behalf.

However, since then, I have been thinking of a type of dualism that is not particularly unlikely: the idea that the world I and others see all around us is a virtual reality videogame—except that this game involves all of our senses and a clouded memory of our lives outside of the videogame. In such a case, it might be that our avatars (which we think of as our whole selves) do a lot of the routine thinking, but the minds of our true selves who entered the videogame are instantiated in substrates beyond and outside of the familiar quarks, leptons, force particles and Higgs bosons that are part of the warp and woof of the programming of the videogame.

If we are indeed in a souped-up virtual reality videogame, our true selves might only intervene in what our avatars are doing once every few seconds, once every few minutes, once every few hours, or even only once every few days if we choose to mostly go along for the ride, treating the videogame as if it were a movie.

These interventions would, indeed, violate the laws of physics, but it could be relatively hard to set up instruments to successfully detect them. Let me assume for the moment that it is seen as making a more fun virtual reality videogame if it is possible to detect that one is inside a videogame. That is, let me suppose that the videogame isn’t set up to hide from a determined experimenter the fact that one is in a videogame. Still, it would require finding a small (but serious) localized violation of the laws of physics we know (which are the default of the videogame we are in), in a hard-to-predict microscopic location in the brain. That is, suppose that someplace in the brain, every minute or so, as our true selves make a choice about what general direction to go in the game, there is a violation of the laws of physics we know.

There might be a moral dimension to you and I being in a virtual reality videogame. One can just play the game for fun, or one can play the game as a character-building exercise. Having fun during the game might feel good afterward and having tried hard to do good during the game might feel very rewarding once the game is over.

Discussing this, I notice an oddity: many accounts of dualism have one’s spirit or soul “inside” one’s body. Why can’t one’s spirit or soul be outside the universe, as it is if I and others are in a virtual reality videogame?

One possibly pernicious aspect of the idea that you and I are in a virtual reality videogame is that we might mistakenly think that someone who is a real person is a computer-generated character, and fail to treat them with the care they deserve. On the other hand, someone who seems of low status within the videogame might be a very high status real person outside the videogame; that is a reason to treat everyone well, a little like the way the Greeks said gods would come in disguise to test out people’s hospitality.

One thing I want to insist on is that, although some other versions of dualism are more traditional, any type of dualism, carefully considered, is at least as odd as the idea that we are inside of a virtual reality videogame.

Related Posts:

Where is Social Science Genetics Headed?

Social science genetics is on the rise. The article shown above is a recent triumph. By knowing someone’s genes alone, it is possible to predict 11–13% of the number of years of schooling they have. Such a prediction comes from adding up tiny effects of many, many genes.

Since a substantial fraction of my readers are economists, let me mention that some of the important movers and shakers in social science genetics are economists. For example, behind some of the recent successes is a key insight, which David Laibson among others had to defend vigorously to government research funders: large sample sizes were so important in accurately measuring the effects of genes that it was worth sacrificing quality of an outcome variable if that would allow a much larger sample size. Hence, when they were doing the kind of research illustrated by the article shown above, it was a better strategy to put the lion’s share of effort into genetic prediction of number of years of education than genetic prediction of intelligence, simply because number of years of education was a variable collected along with genetic data for many more people than intelligence was.

One useful bit of terminology for genetics is that other non-genetic information about an individual, such as years of education or blood pressure, is called a “phenotype.” Another is that linear combinations of data across many genes intended to be best linear predictors of a particular phenotype are called “polygenic scores.” Polygenic scores have known signal-to-noise ratios, making it possible to do measurement-error corrections for their effects. (See “Adding a Variable Measured with Error to a Regression Only Partially Controls for that Variable” and “Statistically Controlling for Confounding Constructs is Harder than You Think—Jacob Westfall and Tal Yarkoni.”)
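To make the two ideas in the paragraph above concrete, here is a minimal simulation sketch. It treats a polygenic score as a weighted sum of allele counts, adds noise to that score, and shows how a known signal-to-noise ratio lets one undo the resulting attenuation in a regression coefficient. Everything in it is an illustrative assumption rather than anything from the research described here: the function names, the equal SNP weights, the noise levels, and the textbook errors-in-variables correction (divide the naive slope by the score's reliability).

```python
import random


def polygenic_score(allele_counts, weights):
    """Linear combination of allele counts (0, 1, or 2 per SNP)."""
    return sum(w * g for w, g in zip(weights, allele_counts))


def variance(xs):
    m = sum(xs) / len(xs)
    return sum((x - m) ** 2 for x in xs) / len(xs)


def ols_slope(x, y):
    """Slope from a one-variable least-squares regression of y on x."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    return sxy / sxx


def simulate_attenuation(n_people=5000, n_snps=20, true_effect=1.0, seed=0):
    """Return (naive_slope, corrected_slope) for a noisy polygenic score."""
    rng = random.Random(seed)
    weights = [0.3] * n_snps  # hypothetical true SNP weights
    true_scores, noisy_scores, outcomes = [], [], []
    for _ in range(n_people):
        # Each allele count is the sum of two independent 0/1 draws.
        genotype = [rng.randint(0, 1) + rng.randint(0, 1) for _ in range(n_snps)]
        true_score = polygenic_score(genotype, weights)
        # The score we actually observe is the true score plus noise.
        noisy_score = true_score + rng.gauss(0, 1)
        outcome = true_effect * true_score + rng.gauss(0, 1)
        true_scores.append(true_score)
        noisy_scores.append(noisy_score)
        outcomes.append(outcome)
    # Reliability = signal variance / total variance of the observed score.
    # In practice this would come from the known signal-to-noise ratio.
    reliability = variance(true_scores) / variance(noisy_scores)
    naive = ols_slope(noisy_scores, outcomes)
    corrected = naive / reliability
    return naive, corrected


if __name__ == "__main__":
    naive, corrected = simulate_attenuation()
    print(f"naive slope: {naive:.2f}, corrected slope: {corrected:.2f}")
```

In this setup the naive slope on the noisy score comes out well below the true effect of 1.0, while the corrected slope lands close to it. With several regressors the correction is more involved, which is the point of the two posts cited above.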

Both private companies and government-supported initiatives around the world are rapidly increasing the amount of human genotype data linked to other data (with a growing appreciation of the need for large samples of people over the full range of ethnic origins). For common variations (SNPs) that have well-known short-range correlations, genotyping using a chip now costs about $25 per person when done in bulk, while “sequencing” to measure all variations, including rare ones, costs about $100 per person, with the costs rapidly coming down. Sample sizes are already above a million individuals, with concrete plans for several million more that will be data sets that are quite accessible to researchers.

In this post, I want to forecast where social science genetics is headed in the next few years. I don’t think I am sticking my neck out very much with these forecasts about cool things people will be doing. Those in the field might say “Duh. Of course!” Here are some types of research I think will be big:

Genetic Causality from Own Genes and Sibling Effects. Besides increasing the amount of data on non-European ethnic groups, a key direction data collection will move in the future is to collect genetic data on mother-father-self trios and mother-father-self-sibling quartets. (For example, the PSID is now in the process of collecting genetic data.) Conditional on the mother’s and father’s genes, both one’s own genes and the genes of a full sibling are as random as a coin toss. As a result, given such data, one can get clean causal estimates of the effects of one’s own genes on one’s outcomes and the effects of one’s sibling’s genes on one’s outcomes.
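The “coin toss” can be sketched in a few lines. This is a toy simulation of a single variant, assuming each parent passes on one of their two copies with equal probability; the function names and the seed are my own choices for illustration.

```python
import random


def transmit(parent_genotype, rng):
    """Allele (0 or 1) passed on by a parent carrying 0, 1 or 2 copies."""
    if parent_genotype == 0:
        return 0
    if parent_genotype == 2:
        return 1
    return rng.randint(0, 1)  # heterozygous parent: a fair coin toss


def child_genotype(mother, father, rng):
    """Number of copies (0, 1 or 2) the child ends up with."""
    return transmit(mother, rng) + transmit(father, rng)


rng = random.Random(0)  # fixed seed so the run is repeatable
draws = [child_genotype(1, 1, rng) for _ in range(10_000)]
mean_child = sum(draws) / len(draws)
print(mean_child)
```

With both parents heterozygous, the child's genotype averages out to the parental mean, and the deviation from that mean is pure chance. That chance deviation is what makes the within-family comparison clean.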

Genetic Nurturance. By looking at the genes of the mother and father that were not transmitted to self, one can also get important evidence on the effects of parental genes on the environment parents are providing. (Here, the evidence is not quite as clean. Everything the non-transmitted parental genes are correlated with could be having a nurture effect on self.) Effects of non-transmitted parental genes are interesting because most things that parents can do other than passing on their genes are things a policy intervention could imitate. That is, the effects of non-transmitted parental genes reflect nurture.

Recognizing Faulty Identification Claims that Involve Genetic Data. One reason expertise in social science genetics is valuable is that questionable identification claims will be made and are being made involving genetic data. While the emerging data will allow very clean identification of causal effects of genes on a wide range of outcomes, the pathway by which genes have their effects can be quite unclear. People will make claims that genes are good instruments. This is seldom true, because the exclusion restriction that genes only act through a specified set of right-hand side variables is seldom satisfied. Also, it is important to realize that large parts of the causal chain are likely to go through the social realm outside an individual’s body.

Treatment Effects that Vary by Polygenic Score. One interesting finding from research so far is that treatment effects often differ quite a bit when the sample is split by a relevant polygenic score. For example, effects of parental income on years of schooling are more important for women who have low polygenic scores for educational attainment. That is, women who have genes predicting a lot of education will get a lot of education even if parental income is low, but women whose genes predict less education will get a lot of education only if parental income is high. The patterns are different for men.

Note that variation in treatment effects by polygenic score has obvious policy implications. For example, suppose we could identify kids in very bad environments who had genes suggesting they would really succeed if only they were given true equality of opportunity. This would sharpen the social justice criticism of the lack of opportunity they currently have. Notice that many of the policy implications based on treatment effects that vary by polygenic score would be highly controversial, so knowledge of the ins and outs of ethical debates about the use of genetic data in this way becomes quite important.

Enhanced Power to Test Prevention Strategies. One unusual aspect of genes is that the genetic data, with all the predictive power they provide, are available from the moment of birth and even before. This means that prevention strategies (say for teen pregnancy, teen suicide, teen drug addiction or being a high school dropout) can be tested on populations whose genes indicate elevated risk, which could dramatically increase power for field experiments.

The Option Value of Genetic Data. Suppose one is doing a lab experiment with a few hundred participants. With a few thousand dollars, one could collect genetic data. With that data, one could immediately begin to control for genetic differences that contribute to standard errors and look at differences in treatment effects on experimental subjects who have different polygenic scores. But with the same data, one would also be able to do a new analysis four years later using more accurate polygenic scores or using polygenic scores that did not exist earlier. In this sense, genetic data grows in value over time.

The example above was with genetic data in a computer file that can be combined with coefficient vectors to get improved or new linear combinations. If one is willing to hold back some of the genetic material for later genetic analysis with future technologies (as the HRS did), totally new measurements are possible. For example, many researchers have become interested in epigenetics—the methylation marks on genes that help control expression of genes.

Assortative Mating. Ways of using genetic data that are not about polygenic scores in a regression will emerge. My own genetic research—working closely with Patrick Turley and Rosie Li—has been about using genetic data on unrelated individuals to look at the history of assortative mating. Genetic assortative mating for a polygenic score is defined as a positive covariance between the polygenic scores of co-parents. But one need not have direct covariance evidence. A difference equation indicates that a positive covariance between the polygenic scores of co-parents shows up in a higher variance of the polygenic scores of the children. Hence, data on unrelated individuals shows the assortative mating covariance among the parents’ generation in the birth-year of those on whom one has data. One can go further. When a large fraction of a population is genotyped, the genetic data can, itself, identify cousins. This makes it possible to partition people’s genetic data in a way that allows one to measure assortative mating in even earlier generations.
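Here is a stripped-down sketch of why a positive covariance between co-parents raises the variance of children's scores. It is not the difference equation from our research; it assumes a simple additive model in which a child's score is the average of the two parental scores plus segregation noise that is independent of the parents. All names and numbers are hypothetical.

```python
def child_score_variance(var_mother, var_father, cov_parents, var_segregation):
    """Variance of child = (mother + father) / 2 + segregation noise.

    Assumes the segregation noise is independent of both parental scores.
    """
    return 0.25 * (var_mother + var_father) + 0.5 * cov_parents + var_segregation


# Illustrative values: parental variances of 1, segregation variance of 0.5.
random_mating = child_score_variance(1.0, 1.0, 0.0, 0.5)
assortative = child_score_variance(1.0, 1.0, 0.2, 0.5)
print(random_mating, assortative)
```

Under these assumptions the covariance term enters with a coefficient of one half, so any positive covariance between co-parents shows up directly as extra variance among the children.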

Conclusion: Why More and Better Data Will Make Amazing Things Possible in Social Science Genetics. One interesting thing about genetic data is that there is a critical sample size at which it is possible to get good accuracy on the genes for any particular outcome variable. Why is that? After multiple-hypothesis-testing correction for the fact that there are many, many genes being tested, “genome-wide significance” requires a z-score of 5.45. (Note that, with large sample sizes, the z-score is essentially equal to the t-statistic.) But a characteristic of the normal distribution is that at such a high z-score, even a small change in z-score can make a huge difference in p-value. A z-score of 5.03 has a p-value ten times as big, and a z-score of 5.85 has a p-value ten times smaller. Because the z-score for a given coefficient estimate grows with the square root of the sample size, in this region an increase in sample size of roughly 18% is enough to change a p-value by an order of magnitude. Thinking about things the other way around, suppose the sizes of the effects of different genes are distributed normally. If, to start with, one can only reliably detect things far out on the normal distribution of effect sizes, then a modest percentage increase in sample size will make a bigger slice of the normal distribution of effect sizes reliably detectable, which will mean identifying many times as many genes as genome-wide significant.
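The arithmetic behind those z-scores can be checked with a short script. This is a sketch under two assumptions: p-values are two-sided normal tail probabilities, and for a fixed coefficient estimate the z-score scales with the square root of the sample size. The function names are mine.

```python
import math


def two_sided_p(z):
    """Two-sided p-value for a standard normal z-score."""
    return math.erfc(abs(z) / math.sqrt(2))


def sample_size_ratio(z_from, z_to):
    """Factor by which n must grow to move z_from to z_to (z scales as sqrt(n))."""
    return (z_to / z_from) ** 2


for z in (5.03, 5.45, 5.85):
    print(f"z = {z:.2f}   two-sided p = {two_sided_p(z):.2e}")

print(f"n ratio for 5.03 -> 5.45: {sample_size_ratio(5.03, 5.45):.3f}")
print(f"n ratio for 5.45 -> 5.85: {sample_size_ratio(5.45, 5.85):.3f}")
```

Each step between those three z-scores moves the p-value by about a factor of ten, while the implied increase in sample size is well under 20% in both cases.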

The critical sample size depends on what phenotype one is looking at. Most importantly, power is lower for diseases or other conditions that are relatively rare. The critical sample size at which we will get a good polygenic score for anorexia is much larger than the sample size at which one can get a good polygenic score for educational attainment. But as sample sizes continue to increase, at some point, relatively suddenly, we will be there with a good polygenic score for anorexia. Just imagine if parents knew in advance that one of their children was at particularly high risk for anorexia. They’d be likely to do things differently and might be able to avert that problem.

I am sure there are many cool things in the future of social science genetics that I can’t imagine. It is an exciting field. I am delighted to be along for the ride!

Here are some other posts on genetic research:

Randolph Nesse on Efforts to Prevent Drug Addiction

Criminalization and interdiction have filled prisons and corrupted governments in country after country. However, increasingly potent drugs that can be synthesized in any basement make controlling access increasingly impossible. Legalization seems like a good idea but causes more addiction. Our strongest defense is likely to be education, but scare stories make kids want to try drugs. Every child should learn that drugs take over the brain and turn some people into miserable zombies and that we have no way to tell who will get addicted the fastest. They should also learn that the high fades as addiction takes over.

New treatments are desperately needed.

—Randolph Nesse, Good Reasons for Bad Feelings, in Chapter 13, “Good Feelings for Bad Reasons.”

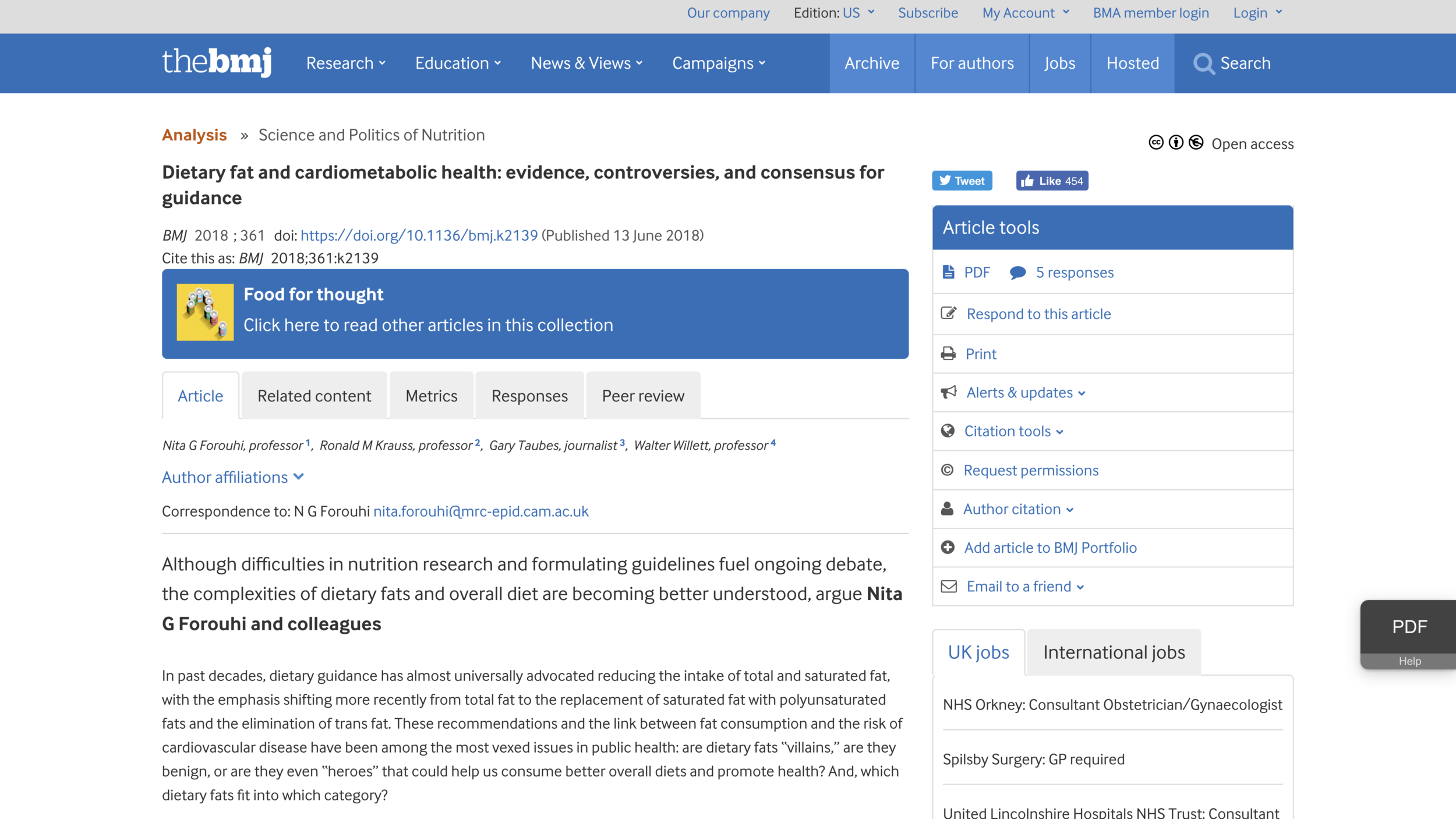

What is the Evidence on Dietary Fat?

Based on an article in “Obesity Reviews” by Santos, Esteves, Pereira, Yancy and Nunes entitled “Systematic review and meta-analysis of clinical trials of the effects of low carbohydrate diets on cardiovascular risk factors,” lowcarb diets look like (on average) they reduce

weight

abdominal circumference

systolic and diastolic blood pressure

blood triglycerides

blood glucose

glycated hemoglobin

insulin levels in the blood

C-reactive protein

while raising HDL cholesterol (“good cholesterol”). But lowcarb diets don’t seem to reduce LDL cholesterol (“bad cholesterol”). This matches my own experience. In my last checkup my doctor said all of my blood work looked really good, but that one of my cholesterol numbers was mildly high.

Nutritionists often talk about the three “macronutrients”: carbohydrates, protein and fat. For nutritional health, many of the most important differences are within each category. Sugar and easily-digestible starches like those in potatoes, rice and bread are very different in their health effects from resistant starches and the carbs in leafy vegetables. Animal protein seems to be a cancer promoter in a way that plant proteins are not. (And non-A2 cow’s milk contains a protein that is problematic in other ways.) Transfats seem to be quite bad, but as I will discuss today, there isn’t clear evidence that other dietary fats are unhealthy.

Among the three macronutrients, I think dietary fat doesn’t deserve its especially bad reputation, and protein doesn’t deserve its especially good reputation. Sugar deserves an even worse reputation than it has, but there are other carbs that are OK. Cutting back on bad carbs usually goes along with increasing either good carbs, protein or dietary fat. Many products advertise how much protein they have, but that is not necessarily a good thing.

Besides transfats, which probably deserve their bad reputation, saturated fats have the worst reputation among dietary fats. So let me focus on the evidence about saturated fat. One simple point to make is that saturated fats in many meat and dairy products tend to come along with animal protein. As a result, saturated fat has long been in danger of being blamed for crimes committed by animal protein. Butter doesn’t have that much protein in it, but tends to be eaten on top of the worst types of carbs, and so is in danger of being blamed for crimes committed by very bad carbs.

Part of the conviction that saturated fat is unhealthy comes from the effect of eating saturated fat on blood cholesterol levels. So any discussion of whether saturated fat is bad has to wrestle with the question of how much we can infer from effects on blood cholesterol. One of the biggest scientific issues there is that the number of different subtypes of cholesterol-transport objects in the bloodstream is considerably larger than the number of quantities that are typically measured. It isn’t just HDL cholesterol, triglycerides and LDL cholesterol numbers that matter. The paper shown at the top of this post, “Systematic review and meta-analysis of clinical trials of the effects of low carbohydrate diets on cardiovascular risk factors,” explains:

Researchers now widely recognise the existence of a range of LDL particles with different physicochemical characteristics, including size and density, and that these particles and their pathological properties are not accurately measured by the standard LDL cholesterol assay. Hence assessment of other atherogenic lipoprotein particles (either LDL alone, or non-HDL cholesterol including LDL, intermediate density lipoproteins, and very low density lipoproteins, and the ratio of serum apolipoprotein B to apolipoprotein A1) have been advocated as alternatives to LDL cholesterol in the assessment and management of cardiovascular disease risk. Moreover, blood levels of smaller, cholesterol depleted LDL particles appear more strongly associated with cardiovascular disease risk than larger cholesterol enriched LDL particles, while increases in saturated fat intake (with reduced consumption of carbohydrates) can raise plasma levels of larger LDL particles to a greater extent than smaller LDL particles. In that case, the effect of saturated fat consumption on serum LDL cholesterol may not accurately reflect its effect on cardiovascular disease risk.

In brief, cholesterol-transport object subtypes whose abundance isn’t typically measured separately could matter a lot for health. Some drugs that reduce LDL help reduce mortality; others don’t. The ones that reduce mortality could be reducing LDL by reducing some of the worst subtypes of cholesterol-transport objects, while the ones that don’t work could be reducing LDL by reducing some relatively innocent subtypes of cholesterol-transport objects. As Santos, Esteves, Pereira, Yancy and Nunes write in the article shown at the top of this post:

As the diet-heart hypothesis evolved in the 1960s and 1970s, the focus shifted from the effect of dietary fat on total cholesterol to LDL cholesterol. However, changes in LDL cholesterol are not an actual measure of heart disease itself. Any dietary intervention might influence other, possibly unmeasured, causal factors that could affect the expected effect of the change in LDL cholesterol. This possibility is clearly shown by the failure of several categories of drugs to reduce cardiovascular events despite significant reductions in plasma LDL cholesterol levels.

Another way to put the issue is that cross-sectional variation in LDL (“bad”) cholesterol levels for which higher LDL levels look bad for health may involve a very different profile of changes in cholesterol-transport object subtypes than eating more saturated fats from coconut milk, cream or butter would cause (in the absence of the bad carbs such as bread or potatoes that often go along with butter). Likewise, variation in LDL (“bad”) cholesterol levels associated with statin treatment in which statin drugs look good for reducing mortality may involve a very different profile of changes in cholesterol-transport object subtypes than eating more saturated fats from coconut milk, cream or butter itself would cause.

The article “Systematic review and meta-analysis of clinical trials of the effects of low carbohydrate diets on cardiovascular risk factors” gives an excellent explanation of the difficulty in getting decisive evidence about the effects of eating saturated fat on disease. The relevant passage deserves to be quoted at length:

These controversies arise largely because existing research methods cannot resolve them. In the current scientific model, hypotheses are treated with scepticism until they survive rigorous and repeated tests. In medicine, randomised controlled trials are considered the gold standard in the hierarchy of evidence because randomisation minimises the number of confounding variables. Ideally, each dietary hypothesis would be evaluated by replicated randomised trials, as would be done for the introduction of any new drug. However, this is often not feasible for evaluating the role of diet and other behaviours in the prevention of non-communicable diseases.

One of the hypotheses that requires rigorous testing is that changes in dietary fat consumption will reduce the risk of non-communicable diseases that take years or decades to manifest. Clinical trials that adequately test these hypotheses require thousands to tens of thousands of participants randomised to different dietary interventions and then followed for years or decades until significant differences in clinical endpoints are observed. As the experience of the Women’s Health Initiative suggests, maintaining sufficient adherence to assigned dietary changes over long periods (seven years in the Women’s Health Initiative) may be an insurmountable problem. For this reason, among others, when trials fail to confirm the hypotheses they were testing, it is impossible to determine whether the failure is in the hypothesis itself, or in the ability or willingness of participants to comply with the assigned dietary interventions. This uncertainty is also evident in diet trials that last as little as six months or a year.

In the absence of long term randomised controlled trials, the best available evidence on which to establish public health guidelines on diet often comes from the combination of relatively short term randomised trials with intermediate risk factors (such as blood lipids, blood pressure, or body weight) as outcomes and large observational cohort studies using reported intake or biomarkers of intake to establish associations between diet and disease. Although a controversial practice, many, if not most, public health interventions and dietary guidelines have relied on a synthesis of such evidence. Many factors need to be considered when using combined sources of evidence that individually are inadequate to formulate public health guidelines, including their consistency and the likelihood of confounding, the assessment of which is not shared universally. The level of evidence required for public health guidelines may differ depending on the nature of the guideline itself.

That is the state of the solid evidence. In terms of common notions that many people, including doctors, have in their heads, one should be aware of the hysteresis (lock-in from past events) in attitudes toward fat. Measuring overall cholesterol concentrations in the blood became possible in the early 20th century. Tentative conclusions reached then based on what would now clearly be recognized as a too-low-dimensional measurement of cholesterol have continued to affect attitudes even now, the better part of a century later. And the decision of the McGovern committee on dietary guidelines to advise against dietary fat rather than advise against sugar (which was a nonobvious duel among competing experts at the time) became dogma for a long time. Thankfully, the idea that dietary fat is worse than sugar is weakening as a dogma, so there is a chance now for scientific evidence to play a decisive role. If only solid evidence were easier to come by!

No one knows the truth of the matter about dietary fat. And many doctors know less than you do now if you have made it to the end of this post. Where have I placed my bets? Eliminating sugar and other bad carbs from my diet would make my diet too bland for comfort if I didn’t allow a lot of fats. I eat a lot of avocados and a lot of olive oil, which most experts think have quite good fats. (See “In Praise of Avocados.”) But I also eat quite a bit of coconut milk, cream and butter (without the bread or potatoes!). I don’t know if that is OK, but I feel quite comfortable doing so.

In addition to all of the evidentiary problems mentioned above, very few clinical trials look at the effects of what kinds of fats people eat when they are fasting regularly (that is, going without food for extended periods of time). It may be just my optimism, but if saturated fats do have some bad effects, I have some hope that fasting gives my body time to repair any damage that may result.

I dream of a day when there will be funds to do types of dietary research that have been underdone. I think research funding has been skewed toward cure of diseases people already have over research that can help prevention. Hopefully, that skewing will someday be rectified.

For annotated links to other posts on diet and health, see:

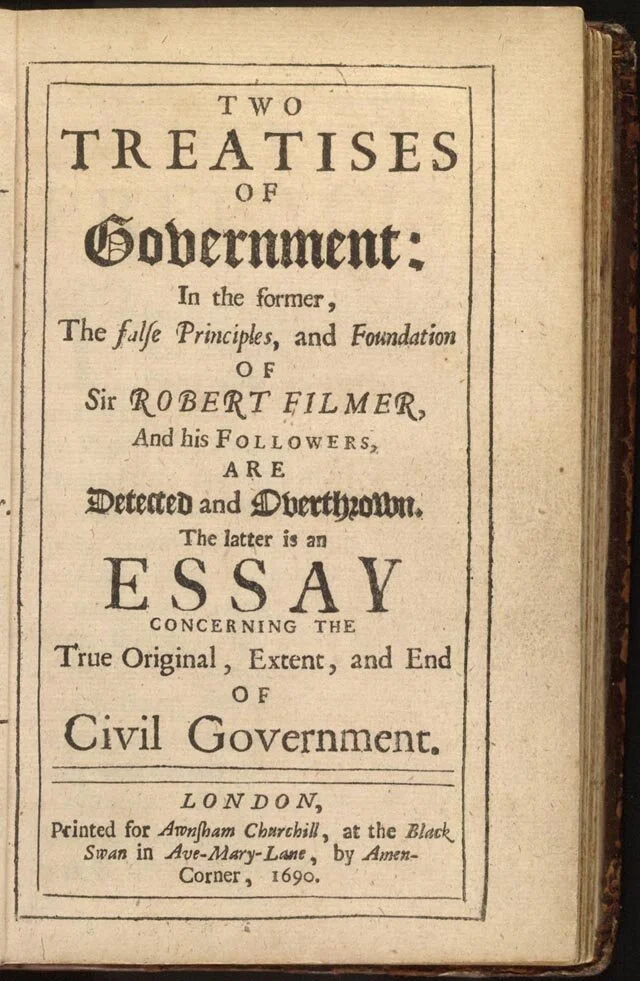

Miles Kimball on John Locke's Second Treatise

I have finished blogging my way through John Locke’s Second Treatise on Government: Of Civil Government, just as I blogged my way through John Stuart Mill’s On Liberty. (See “John Stuart Mill’s Defense of Freedom” for links to those posts.) These two books lay out the main two philosophical approaches to defending freedom: John Stuart Mill’s Utilitarian approach and John Locke’s Natural Rights approach. To me, both of these approaches are appealing, though I lean more to the Utilitarian approach. One way in which I combine these two approaches is by maintaining that no government action that clearly both reduces freedom and lowers overall social welfare is legitimate, regardless of what procedural rules have been followed in its enactment.

In my blog posts about John Locke’s Second Treatise on Government: Of Civil Government and John Stuart Mill’s On Liberty I am not afraid to disagree with John Locke or John Stuart Mill. But I find myself agreeing with each of them much more than I disagree. My own love of and devotion to freedom is much stronger as a result of blogging through these two books. I am grateful to be a citizen of the United States of America, which was, in important measure, founded on the ideas laid out by John Locke. If the United States of America is true to its founding conception, it will be a land of even greater freedom in the future than it is now.

I have organized my blog posts on John Locke’s Second Treatise on Government: Of Civil Government according to five sections of the book. Each of the following is a bibliographic post with links to the posts on that section.

Links to Posts on Chapters I–III: John Locke's State of Nature and State of War

Links to Posts on Chapters IV–V: On the Achilles Heel of John Locke's Second Treatise: Slavery and Land Ownership

Links to Posts on Chapters VI–VII: John Locke Against Natural Hierarchy

Links to Posts on Chapters VIII–XI: John Locke's Argument for Limited Government

Links to Posts on Chapters XII–XIX: John Locke Against Tyranny

In addition to the links within the posts above traversing the Second Treatise in order, I have one earlier post based on John Locke's Second Treatise:

Statistically Controlling for Confounding Constructs is Harder than You Think—Jacob Westfall and Tal Yarkoni

Last week, I posted “Adding a Variable Measured with Error to a Regression Only Partially Controls for that Variable.” Today, to reinforce that message, I’ll discuss the PLOS ONE paper “Statistically Controlling for Confounding Constructs is Harder than You Think” (ungated), by Jacob Westfall and Tal Yarkoni. All the quotations in this post come from that article. (Both Paige Harden and Tal Yarkoni himself pointed me to this article.)

A key bit of background is that social scientists are often interested not just in prediction, but in understanding. Jacob and Tal write:

To most social scientists, observed variables are essentially just stand-ins for theoretical constructs of interest. The former are only useful to the extent that they accurately measure the latter. Accordingly, it may seem natural to assume that any statistical inferences one can draw at the observed variable level automatically generalize to the latent construct level as well. The present results demonstrate that, for a very common class of incremental validity arguments, such a strategy runs a high risk of failure.

What is “incremental validity”? Jacob and Tal explain:

When a predictor variable in a multiple regression has a coefficient that differs significantly from zero, researchers typically conclude that the variable makes a “unique” contribution to the outcome.

“Latent variables” are the underlying concepts or “constructs” that social scientists are really interested in. This passage distinguishes latent variables from the “proxies” actually in a data set:

And because measured variables are typically viewed as proxies for latent constructs of substantive interest … it is natural to generalize the operational conclusion to the latent variable level; that is, to conclude that the latent construct measured by a given predictor variable itself has incremental validity in predicting the outcome, over and above other latent constructs that were examined.

However, this is wrong, for the reason stated in the title of my post: “Adding a Variable Measured with Error to a Regression Only Partially Controls for that Variable.” Here, it is crucial to realize that any difference between the variable actually available in a data set and the underlying concept it is meant to proxy for counts as “measurement error.”
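A small calculation shows how this plays out. The sketch below is my own toy setup, not Jacob and Tal's simulation: a latent construct t with variance 1 is the only true cause of y; x1 is a proxy for t with added measurement error; and x2 is a second variable with variance 1 that is correlated with t but has no effect on y. The function returns the population regression coefficient on x2 when y is regressed on x1 and x2.

```python
def coef_on_other_variable(rho, error_var):
    """Population OLS coefficient on x2 when regressing y on x1 and x2.

    Assumed setup (for illustration only):
      t  : latent construct, variance 1, the only true cause of y
      x1 : t plus independent measurement error with variance error_var
      x2 : variance 1, covariance rho with t, no direct effect on y
    """
    var_x1 = 1.0 + error_var   # the proxy is noisier than the construct
    var_x2 = 1.0
    cov_x1_x2 = rho            # measurement error is unrelated to x2
    cov_y_x1 = 1.0             # y moves one-for-one with t
    cov_y_x2 = rho             # x2 relates to y only through t
    numerator = cov_y_x2 * var_x1 - cov_y_x1 * cov_x1_x2
    denominator = var_x1 * var_x2 - cov_x1_x2 ** 2
    return numerator / denominator


exact_control = coef_on_other_variable(rho=0.5, error_var=0.0)
noisy_control = coef_on_other_variable(rho=0.5, error_var=0.25)
print(exact_control, noisy_control)
```

When the construct is measured exactly, the coefficient on x2 is zero, as it should be. With measurement error in the control, x2 picks up a nonzero coefficient even though it has no effect of its own: the proxy only partially controls for the construct.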

How bad is the problem?

The scope of the problem is considerable: literally hundreds of thousands of studies spanning numerous fields of science have historically relied on measurement-level incremental validity arguments to support strong conclusions about the relationships between theoretical constructs. The present findings inform and contribute to this literature—and to the general practice of “controlling for” potential confounds using multiple regression—in a number of ways.

Unless a measurement error model is used, or a concept is measured exactly, the words “controlling for” and “adjusting for” are red flags for problems:

… commonly … incremental validity claims are implicit—as when researchers claim that they have statistically “controlled” or “adjusted” for putative confounds—a practice that is exceedingly common in fields ranging from epidemiology to econometrics to behavioral neuroscience (a Google Scholar search for “after controlling for” and “after adjusting for” produces over 300,000 hits in each case). The sheer ubiquity of such appeals might well give one the impression that such claims are unobjectionable, and if anything, represent a foundational tool for drawing meaningful scientific inferences.

Unfortunately, incremental validity claims can be deeply problematic. As we demonstrate below, even small amounts of error in measured predictor variables can result in extremely poorly calibrated Type 1 error probabilities.

…

… many, and perhaps most, incremental validity claims put forward in the social sciences to date have not been adequately supported by empirical evidence, and run a high risk of spuriousness.

The bigger the sample size, the more confidently researchers will assert things that are wrong:

We demonstrate that the likelihood of spurious inference is surprisingly high under real-world conditions, and often varies in counterintuitive ways across the parameter space. For example, we show that, because measurement error interacts in an insidious way with sample size, the probability of incorrectly rejecting the null and concluding that a particular construct contributes incrementally to an outcome quickly approaches 100% as the size of a study grows.
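The sample-size point in that passage can be illustrated with a rough normal approximation. The sketch assumes a spurious coefficient that is nonzero in the population only because of measurement error, and a standard error that shrinks as one over the square root of the sample size. The specific coefficient (0.125) and the unit standard-error scale are hypothetical numbers chosen for illustration.

```python
import math


def normal_cdf(x):
    """Standard normal cumulative distribution function."""
    return 0.5 * math.erfc(-x / math.sqrt(2))


def rejection_probability(coef, n, se_scale=1.0, critical=1.96):
    """Chance a two-sided test rejects zero when the estimate is
    approximately normal with mean coef and standard error se_scale / sqrt(n)."""
    expected_z = coef * math.sqrt(n) / se_scale
    return normal_cdf(expected_z - critical) + normal_cdf(-expected_z - critical)


for n in (100, 1_000, 10_000):
    print(n, round(rejection_probability(0.125, n), 3))
```

Under these assumptions, a bias that would rarely reach significance in a small study is flagged almost every time in a large one, even though nothing real is being detected.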

The fundamental problem is that the imperfection in variables actually in data sets as proxies for the concepts of interest doesn’t just make it harder to know what is going on; it biases results. If researchers treat proxies as if they were the real thing, there is trouble:

In all of these cases—and thousands of others—the claims in question may seem unobjectionable at face value. After all, in any given analysis, there is a simple fact of the matter as to whether or not the unique contribution of one or more variables in a regression is statistically significant when controlling for other variables; what room is there for inferential error? Trouble arises, however, when researchers behave as if statistical conclusions obtained at the level of observed measures can be automatically generalized to the level of latent constructs [9,21]—a near-ubiquitous move, given that most scientists are not interested in prediction purely for prediction’s sake, and typically choose their measures precisely so as to stand in for latent constructs of interest.

Jacob and Tal have a useful section in their paper on statistical approaches that can deal with measurement error under assumptions that, while perhaps not always holding, are a whole lot better than the assumption that a concept is measured precisely by the proxy in the data for that concept. They also make the point that, after correctly accounting for measurement error (including any differences between what is in the data and the underlying concept of interest), often there is not enough statistical power in the data to say much of anything. That is life. Researchers should be engaging in collaborations to get large data sets that, properly analyzed with measurement error models, can really tell us what is going on in the world, rather than using data sets they can put together on their own that are too small to reliably tell what is going on. (See “Let's Set Half a Percent as the Standard for Statistical Significance.” Note also that preregistration is one way to make results at a less strict level of statistical significance worth taking seriously.) On that, I like the image at the top of Chris Chambers’s Twitter feed:

Dan Benjamin, my coauthor on many papers, and a strong advocate for rigorous statistical practices that can really help us figure out how the world works, suggested the following two articles as also relevant in this context:

Here are links to other posts that touch on statistical issues:

Adding a Variable Measured with Error to a Regression Only Partially Controls for that Variable

Let's Set Half a Percent as the Standard for Statistical Significance

Less Than 6 or More than 9 Hours of Sleep Signals a Higher Risk of Heart Attacks

Eggs May Be a Type of Food You Should Eat Sparingly, But Don't Blame Cholesterol Yet

Henry George Eloquently Makes the Case that Correlation Is Not Causation