A New Engine for Discovery in Economics and Other Social Sciences: RAND's American Life Panel

A few years back, economists and other social scientists and technical experts at RAND and the University of Michigan put together a grant proposal focused on seeing what can be done with web surveys. Thanks to funding provided by the National Institute on Aging (part of the National Institutes of Health), we were able to find out the answer. Leaving out many details, the basic answer is that, except for a few things that have to be done in person, web surveys are at least as good as, and usually better than, other survey methods. RAND’s American Life Panel arose out of that collaboration (though it is now an independent RAND survey that has a wide range of clients other than government research agencies). I can’t pretend to be objective about the American Life Panel. As part of a large team, I have been involved in it from the beginning and I love it.

An important distinction has to be made between commercial web surveys, which use samples of convenience (often trying to match certain broad demographic frequencies to the population as a whole), and scientific web surveys, which make great efforts to get as close to a representative sample as possible, even on characteristics that are unmeasured. The American Life Panel is just such a scientific web survey. Every effort is made not only to draw respondents randomly from the population as a whole, but also to give web access to those randomly chosen who don’t already have it.

By contrast with most surveys, which fairly soon become calcified under the weight of a standard set of questions that are asked again and again and take up most of the available survey time, the American Life Panel (ALP) has, under Arie Kapteyn’s leadership, grown in power and reach through a unique philosophy: experimental modules, initiated in a relatively decentralized way, that over time add up to much more than the sum of their parts. Data from a huge variety of experimental modules can now be compared with data on ALP respondents that duplicates most of what is collected from respondents to Michigan’s Health and Retirement Study and a big subset of what is collected from respondents to Michigan’s Cognitive Economics Study. Arie’s commitment to supporting “bold, persistent experimentation” in surveys augurs well for the future of the American Life Panel.

Because the American Life Panel has only recently come into its own, most economists don’t realize what is there, what can be done with the existing data on the ALP, and what can be done by collecting new experimental data to combine with the ALP’s existing data. For young economists in particular, I am confident there are many, many dissertations hiding in the data already collected, aside from everything that is coming.

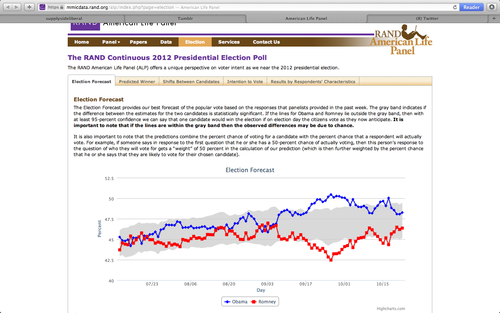

Just for fun, I have put a link under the illustration to the ALP’s election forecast webpage, based on survey questions that probe for probabilities as opposed to discrete answers–a style of survey question that has been advocated most forcefully by Chuck Manski and his coauthors. Also, unlike typical election polls, the results you see above and at the election forecast webpage are based on panel data: the same people are asked the questions repeatedly, so that the changes you see are more likely to be genuine changes in opinion, instead of random fluctuations in the set of people surveyed. (Note: the election polling behind the picture above is not supported by any government agency.)
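
To make the panel-data point concrete, here is a minimal simulation sketch. It is not the ALP's methodology. It assumes a hypothetical population in which each person's stated probability of voting for a candidate does not change between two survey waves, so any measured change is pure sampling noise. The function name `simulate` and all of its parameters are made up for illustration.

```python
import random
import statistics


def simulate(n_population=10_000, n_sample=500, n_trials=200, seed=0):
    """Compare measured wave-to-wave change under a panel design and under
    repeated cross-sections, when true opinions do not change at all."""
    rng = random.Random(seed)
    # Assumption: each person reports a fixed probability (0-100) of voting
    # for candidate A, identical in both waves.
    population = [rng.uniform(0, 100) for _ in range(n_population)]

    panel_changes = []
    cross_section_changes = []
    for _ in range(n_trials):
        wave1 = rng.sample(population, n_sample)
        # Panel: the same respondents are re-interviewed in wave 2.
        panel_wave2 = wave1
        # Repeated cross-section: a fresh random sample is drawn in wave 2.
        fresh_wave2 = rng.sample(population, n_sample)

        baseline = statistics.mean(wave1)
        panel_changes.append(statistics.mean(panel_wave2) - baseline)
        cross_section_changes.append(statistics.mean(fresh_wave2) - baseline)

    # Spread of the measured change across trials for each design.
    return (statistics.pstdev(panel_changes),
            statistics.pstdev(cross_section_changes))


if __name__ == "__main__":
    panel_sd, cross_sd = simulate()
    print(f"panel design: sd of measured change = {panel_sd:.2f} points")
    print(f"fresh samples: sd of measured change = {cross_sd:.2f} points")
```

Under these assumptions the panel design reports no change, because the same people give the same answers, while the fresh-sample design shows fluctuations even though nobody's opinion moved. A real panel also has attrition and measurement error, which this sketch ignores.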

Update: Brad DeLong tweeted to me this interesting comment:

RAND’s reinterview method is a treatment that over time turns low-info voters into high info voters. That’s a powerful bias…

My reaction is that if Brad is right, the views of a high-information group of otherwise typical voters drawn from a representative sample are themselves very interesting. The question that Brad raises is a good example of the value of an experimental survey: it lets us discover and investigate, or rule out, effects such as that.